TL;DR

So, ADEs are everywhere now.

A year ago, this whole "AI coding" thing felt like people duct-taping terminal sessions together and praying git push wouldn't nuke their repo. Fast forward to today, and what started as a few unhinged experiments around Claude Code and Codex has mutated into a legitimate software category. The tools are placing massive bets on worktrees, sub-agents, and how much GUI abstraction we actually want before we beg to go back to the terminal.

When everyone starts arguing about worktree isolation instead of just token generation speed, you know a category has gotten serious.

What even is an ADE?

An ADE is an agentic development environment. At the simplest level, it is where you manage coding agents. A better definition is this: an ADE helps you run multiple long-running software tasks in parallel without losing context, breaking state, or creating git chaos.

Legacy IDEs (yeah, I’m calling them legacy now) were built around the assumption that you are the one writing the code.

ADEs are built around a different assumption. The agents write the code. You spend your time deciding the architecture, verifying the output, and steering five different work streams at once. It's less about typing speed and more about human judgement scaling LLM output.

Karpathy nailed it: the IDE isn't dead, it's just being refactored into a higher-level command center where the "unit of interest" shifts from the file to the agent. This is peak agent-maxxing.

X post

Expectation: the age of the IDE is over Reality: we’re going to need a bigger IDE (imo). It just looks very different because humans now move upwards and program at a higher level - the basic unit of interest is not one file but one agent. It’s still programming.

Andrej Karpathy on X

The workflow shift that matters

That is why worktrees matter so much here. If you have two agents raw-dogging the same repo, they need isolation. If Agent A is debugging a nasty frontend regression and Agent B is vibecoding a database migration, the environment has to stop them from stepping on each other. The ADEs that get this feel like the future. The ones that don't? They just feel like a ChatGPT window glued to VS Code.
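To make the isolation concrete, here is a minimal sketch of giving each agent its own checkout and branch with `git worktree add`. The helper names, the `agent/<name>` branch convention, and the sibling-directory layout are assumptions for illustration, not how any particular ADE does it.

```python
import subprocess
from pathlib import Path


def worktree_path(repo: Path, agent: str) -> Path:
    """Hypothetical layout: one sibling directory per agent, next to the repo."""
    return repo.parent / f"{repo.name}-{agent}"


def worktree_add_command(repo: Path, agent: str, base: str = "main") -> list[str]:
    """Build the `git worktree add` call that gives an agent its own checkout."""
    return [
        "git", "-C", str(repo),
        "worktree", "add",
        "-b", f"agent/{agent}",           # new branch for this agent (assumed naming)
        str(worktree_path(repo, agent)),  # separate working directory
        base,                             # commit-ish the new branch starts from
    ]


def create_worktree(repo: Path, agent: str, base: str = "main") -> Path:
    """Run the command; raises CalledProcessError if git refuses it."""
    subprocess.run(worktree_add_command(repo, agent, base), check=True)
    return worktree_path(repo, agent)
```

With this layout, Agent A would work in `../app-frontend-fix` on `agent/frontend-fix` and Agent B in `../app-db-migration` on `agent/db-migration`. Each worktree has its own working directory, index, and HEAD, so neither agent sees the other's uncommitted changes.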

Why is this happening right now?

Models got better. Harnesses got better. Agents can stay on task longer. They can inspect codebases, run commands, create pull requests, respond to review comments, and keep working without constant babysitting.

Once that became true, the old interface started to feel wrong.

When your bottleneck shifts from "how fast can I type" to "how many agents can I orchestrate before I lose track of what's happening," you need a new interface. You need to see which task is running, which branch it’s mutating, what the CI/CD pipeline thinks about it, and when to step in and take the wheel.
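One way to picture that interface is as a small piece of state per agent. The sketch below is a hypothetical data model, not any tool's actual schema; the field names, the enum values, and the `needs_human` rule are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    RUNNING = "running"
    WAITING = "waiting"  # agent is blocked on a question or approval
    DONE = "done"


class Checks(Enum):
    PENDING = "pending"
    PASSING = "passing"
    FAILING = "failing"


@dataclass
class AgentTask:
    name: str
    branch: str  # the branch this agent is mutating
    status: Status
    checks: Checks = Checks.PENDING

    def needs_human(self) -> bool:
        """Assumed rule: step in when the agent is blocked or CI is red."""
        return self.status is Status.WAITING or self.checks is Checks.FAILING


def attention_queue(tasks: list[AgentTask]) -> list[str]:
    """Names of the tasks a human should look at, in the order given."""
    return [t.name for t in tasks if t.needs_human()]


tasks = [
    AgentTask("frontend-fix", "agent/frontend-fix", Status.RUNNING, Checks.FAILING),
    AgentTask("db-migration", "agent/db-migration", Status.RUNNING),
    AgentTask("docs", "agent/docs", Status.WAITING),
]
print(attention_queue(tasks))  # ['frontend-fix', 'docs']
```

The point of the sketch is that "when to step in" can be derived from a handful of fields, which is roughly what a good ADE surfaces at a glance.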

The build time is rarely what holds me up. It is always the design; how should we architect this? What should this feel like? How should this look? Is that transition subtle enough? How composable should this be? Is this the right metaphor?

— Maggie Appleton, Gas Town

The throttle on software output is no longer implementation speed. It's the quality of human judgement upstream of the agents.

That is the actual job now. Welcome to software engineering in 2026.

The 3 Flavors of ADEs

I've been playing with basically everything in this space, and they all fall into three distinct buckets.

| Type | What it is | Trade-off |
|---|---|---|
| Native wrapper | These just slap a polished GUI on top of Claude Code, Codex, or whatever lab-native CLI you prefer. | When done right, it's the god-tier setup: you get the native model behaviors (which are usually better) but with sane ergonomics for handling diffs, PRs, and parallel branches without losing your mind. |
| Own harness | These madlads build their own agentic runtime and route all models through it. | You get insane flexibility and can plug in any provider. The downside? Sometimes the model behaves like a fish out of water because it isn't running in the exact harness the lab trained it for. |
| Terminal-first | Essentially just tmux on steroids. Upgraded terminal environments optimized for agent orchestration. | It's TUI-morphism at its finest. Power users (myself included) love the lack of abstraction, but it definitely lacks the hand-holding that non-terminal-sickos need for review loops. |

That split matters because two tools can look similar on a screenshot and still feel very different after an hour of real work.

What I actually care about when judging these tools

I don't give a damn about which tool has the longest feature list. The only question that matters is: "Which tool makes running 5 agents in parallel feel calm?"

For me, that mostly comes down to five things.

- Worktree and branch flow. If the product makes isolated parallel work easy, that is a huge win.

- Git visibility. Diffs, checks, PR state, and review comments need to be obvious.

- Harness quality. Native CLI behavior still matters. Some models are simply better inside their home environment.

- Performance. A beautiful ADE that becomes sluggish after twenty minutes is still a bad ADE.

- Flexibility. Some people want one opinionated path. Others want every model and CLI available in one place.

Once you look at the category through that lens, the products become much easier to compare.

Conductor: still one of the best UXs, with trade-offs

Conductor probably has the best overall UX in the space right now.

It is opinionated, polished, and easy to understand quickly. I think that matters more than a lot of technical people want to admit. Most developers do not want to spend half a day configuring their environment before they get any value. Conductor makes the core workflow feel obvious.

Where Conductor stands out

That said, I keep running into the same issue: performance. Once I have been in it for a while, especially with several active threads, the UI gets noticeably choppier. In an orchestration tool, lag matters more than it does in a normal editor.

The other issue is model asymmetry. The Claude experience is much richer than the OpenAI one. And right now, if you think GPT-5.4 is one of the strongest coding models available, that matters.

My view is simple: Conductor is still very good, but polish alone is not enough anymore.

Superset: the best balance right now

Superset takes a different approach and, in my experience, lands in a better place overall.

It keeps the same broad benefits you want from an ADE, but gives you more flexibility without turning everything into chaos. You can run different CLIs, split terminals, manage project-specific commands, and still keep a coherent workspace around git state, files, and checks.

That combination matters.

For me, Superset feels like the product that best understands what serious users actually want: not a magical abstraction layer that hides the tools, but a better operating surface for the tools they already trust.

It also helps that performance has felt more stable. In this category, consistency is a feature. If the app stays fast while you juggle agents, dev servers, and multiple projects, that is not a small implementation detail. It is the point.

The fact that it is open source is a bonus, but not the reason I like it. I like it because it gets the balance right.

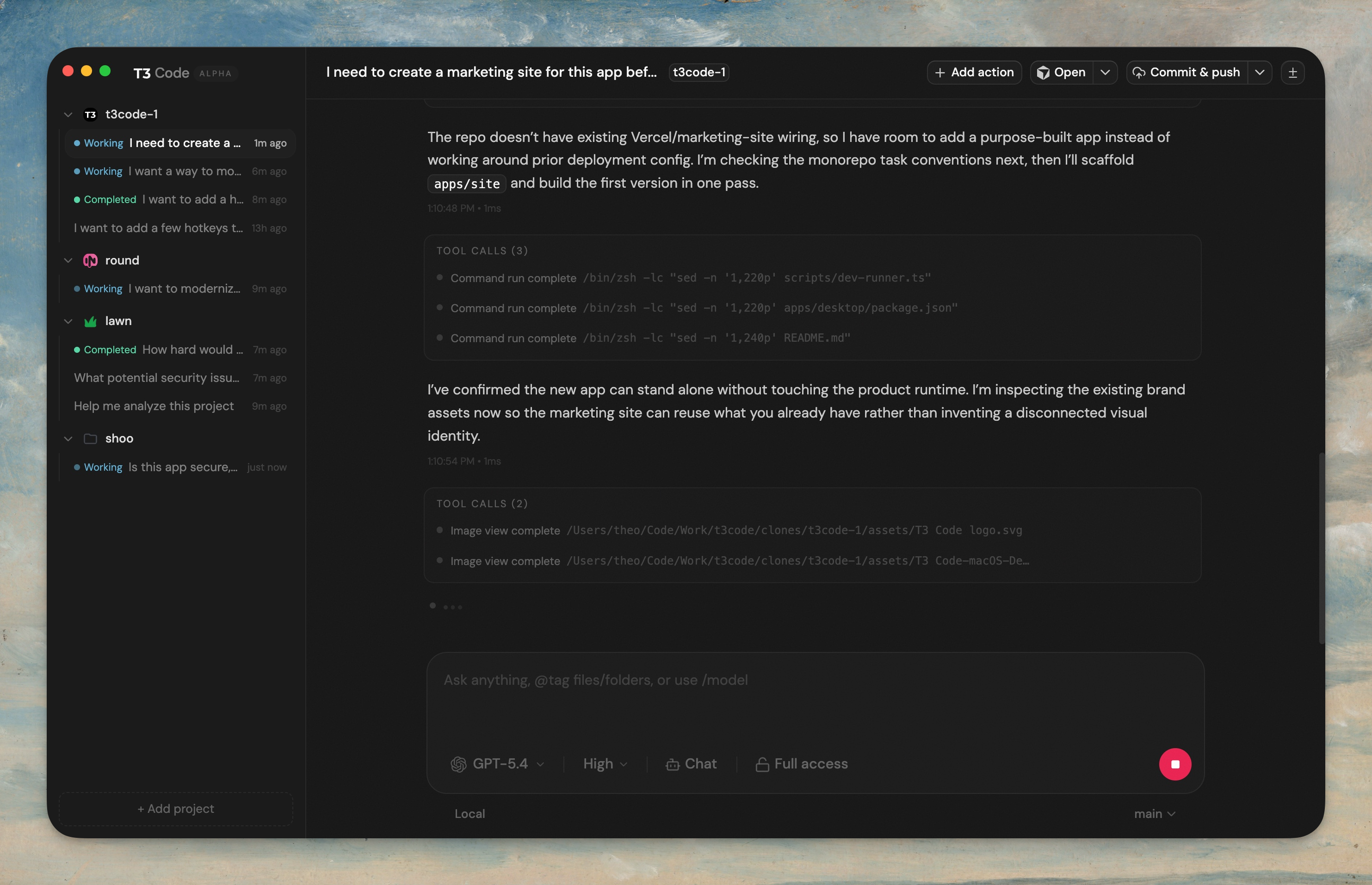

T3 Code: early, promising, and worth watching

T3 Code is still early, but it is one of the more interesting entries.

The appeal is obvious. Theo and his team have strong product instincts, the UI direction is solid, and the open-source gravity around the project means iteration should be fast. It also sits in a sensible lane: it is not trying to replace the underlying tools entirely, but it is not just exposing a raw terminal either.

Right now it still feels alpha. That is fine. Early products should feel early.

What matters is that the shape of the thing makes sense. If they keep improving performance and land broader harness support cleanly, it could become a serious contender in this space very quickly.

Solo: underrated because it does less, but does it cleanly

Solo is a good reminder that not every ADE needs to be maximalist.

It feels lighter, simpler, and more operational. Created by Aaron Francis, it is good at spinning up the moving parts around a project, keeping those processes grouped, and letting you bring agents into that setup without forcing a worktree-first worldview on you.

That means it will not be everyone's favorite. But I think it is underrated precisely because it has restraint. A lot of these products are trying to be everything at once. Solo feels like it knows what it wants to be.

OpenCode and Codex: two very different strengths

OpenCode (which now has a desktop app) is compelling because it is flexible and open. If you want to experiment across models and providers, it gives you a lot of room.

X post

Now in @opencode desktop.

But there is still a real difference between using a model in a third-party harness and using it in the environment it was designed around. Native behavior still matters, especially for longer-running tasks.

Codex, on the other hand, is strong precisely because it is native. The product is focused, the UI is good, the sub-agent story is improving, and OpenAI has generally been more permissive about letting people use subscriptions across surfaces.

X post

You can now summon Codex from anywhere on your desktop. It's easier than ever to reach for Codex.

X post

GPT-5.4 mini matters for subagents because it changes what feels worth handing off. The parent thread should hold the architecture, plan, and progress narrative. Fast subagents can explore the repo, check hypotheses, and preserve the parent thread's limited attention.

The trade-off is obvious: it is a more closed ecosystem. If you want everything in one place, it will feel limiting. If you want the cleanest OpenAI-native experience, it is easy to recommend.

The rest of the field

The rest of the market is messy, which is exactly what you would expect from a category this young.

Collaborator

Collaborator is brand new and in alpha, and honestly, I'm into the canvas approach. It is basically pure agent-maxxing. You open a project and get terminal tiles you can arrange freely. Spawn a Claude Code session here, a dev server there, a browser pane over there. It is more chaotic than the structured ADEs, but for anyone trying to maximize their orchestration leverage, this is the play.

Theo floated a similar idea recently around canvas-based, infinite-scroll dev environments. Whether this developer was inspired by that or not, it is exploring a direction nobody else is seriously touching right now. It is still in its infancy, but the core idea is exactly where things are headed.

CMUX

CMUX is Ghostty with a sidebar. That is reductive but mostly accurate. Split terminals, tabs, a built-in browser, multiple projects in one place. Fully open source. You can run Claude Code, Codex, Pi, or OpenCode directly inside it.

If you prefer a raw terminal experience and just want better project management around it, CMUX is great. I personally lean Superset because it offers more structure without hiding the tools. But as a terminal-first environment, CMUX is excellent. It just is not trying to be a full ADE in the way the others are.

Emdash

Emdash is open source and YC-backed, which sounds promising on paper. In practice, it feels like it is chasing a shape other products already execute better. The layout is near-identical to Conductor. They told me on X they were not inspired by Conductor, which confused me.

You do get flexibility. Any agent, multiple terminals, a built-in browser, a file tree, PR creation, CI checks in the UI. The basics are all there. But when I want polish, I reach for Conductor. When I want flexibility, I reach for Superset. Emdash lands somewhere in the middle without being the best at either.

They recently added SSH into remote machines, which could be a differentiator. But I have not found myself drawn to it when the other options are this strong.

Cursor Glass

Cursor dropped their new Glass UI right after I finished recording my original take. It is essentially a full-blown ADE now. Simplified layout, projects on the left, diff viewer on the right, a built-in browser that is one of the best implementations I have seen in a dev environment, and a marketplace for plugins that makes it quick to get started.

The simplification was the right call. It pushes Cursor squarely into ADE territory. The downside is the same one it has always had: you pay a premium for inference compared to what you get from a direct lab subscription. Some people swear by it anyway, and I understand why. The product maturity is real.

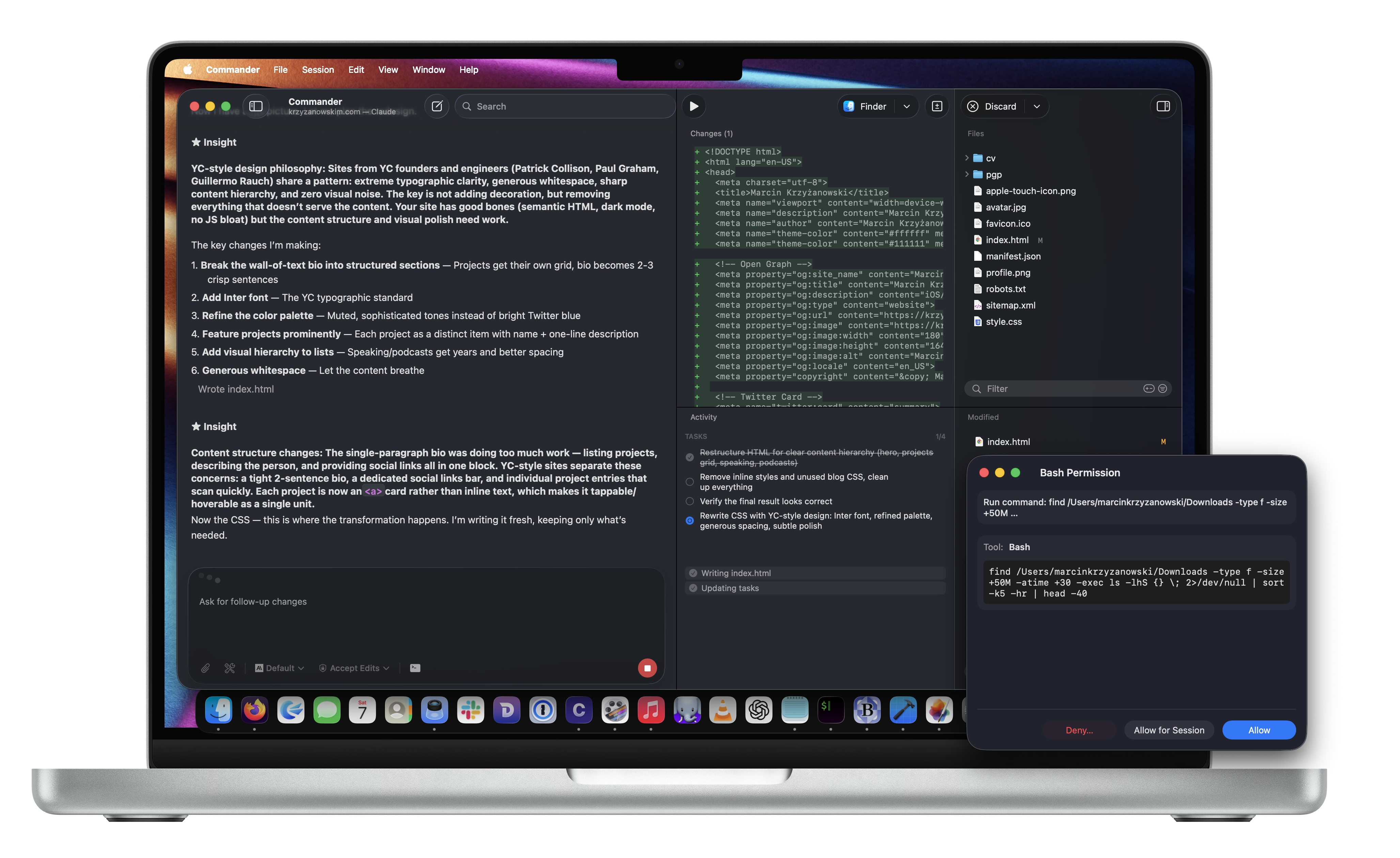

Commander

Commander looks very Mac-native. If that aesthetic is your thing, it supports Claude, Codex, Pi, and OpenCode. Standard chat interface, file tree, changes view. The basics are all there.

But I have not found a reason to pick it over any of the other options. It does not have a unique feature that pulls me in. I am not sure how actively it is being developed either. If someone is genuinely into this one, I would love to hear why. For now, it just does not have enough to stand out.

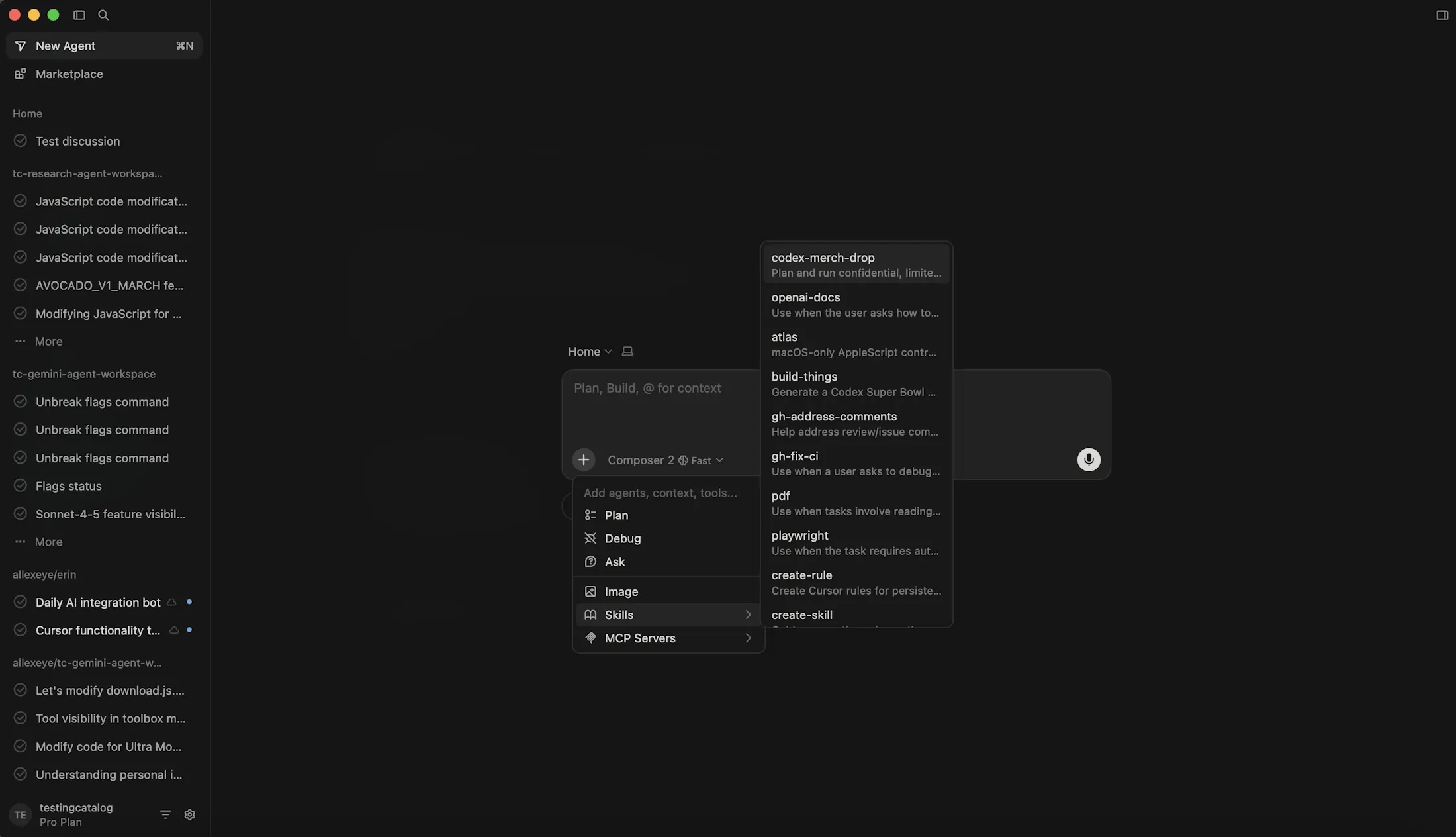

Claude Code desktop app

Not technically outside the main players, but worth flagging here since it often gets overlooked next to the flashier tools. Anthropic's desktop app is the one I recommend to less technical people who want an agent experience without the CLI. The UI is simple, you get the three latest Claude models, built-in connectors and skills, and now remote control from your phone.

For pure Anthropic users paying for Max, it is a great experience. The models are phenomenal for frontend work in particular. Opus 4.6 is probably the strongest model for UI/UX generation right now. Where it falls short is longer agentic tasks where the Codex line of models tends to be more methodical. But as a focused, low-friction way to use Claude Code without touching the terminal, it does its job well.

The broader point stands: even if some of these products do not win, they are still pushing the interface forward. More options, faster iteration, different bets on how much abstraction developers actually want. That is how a category matures.

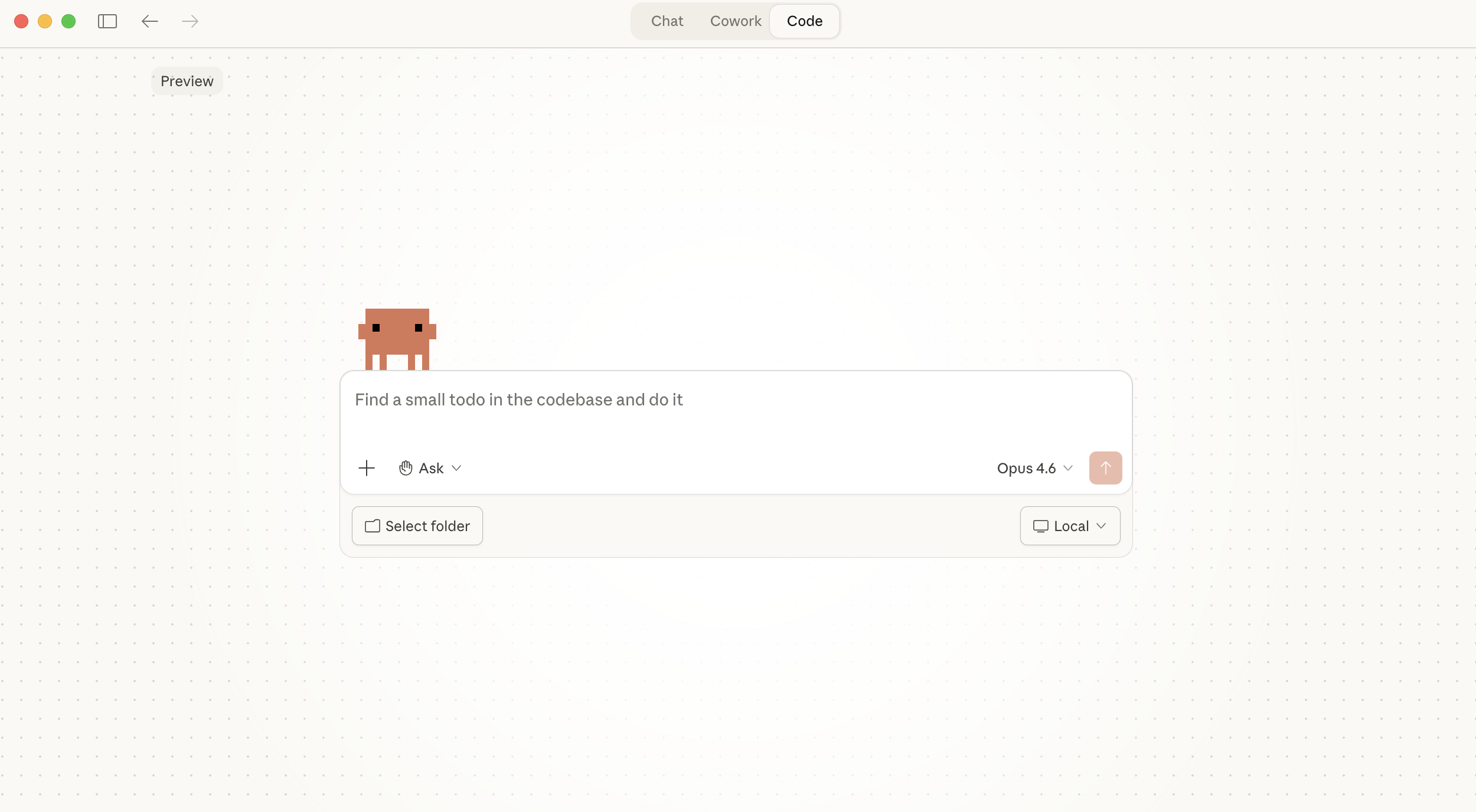

Capy

Capy literally just came out. I have barely used it. But it is worth mentioning because the approach is interesting.

X post

my job as a swe rn is just me watching multiple agents work in parallel on @capydotai a single clean interface to control multiple agents. each agent has access to build, review (and testing soon) capy is the reason why our team has been able to consistently do more than 10 tasks in parallel every day

It runs entirely in the browser with cloud sandboxes. You get your own instance, spin up a dev server in a sandbox, and check out your app from the same environment. The agent can work while your laptop is off. It is the most "cloud-native" take on the ADE concept I have seen.

Right now you have to subscribe to their plan for inference. No bringing your own lab subscriptions. The free trial is fourteen days. I have not lived in it long enough for a definitive verdict, but the polish surprised me for something this early.

The category is real because the job is changing

I think a lot of people still talk about these tools as if they are just fancier wrappers around Claude Code or Codex.

That misses what is actually happening.

The reason ADEs are becoming real is that the role of the developer is changing in practical ways. Not disappearing. Not becoming obsolete. Just changing.

You still need taste. You still need judgment. You still need to understand systems, product trade-offs, and what good output looks like. But more and more, your leverage comes from being able to run several lines of engineering work in parallel, spot issues early, redirect energy, and keep momentum across a broader surface area than one person could manually type through.

Typing is not the bottleneck anymore. Orchestration is.

That is not IDE behavior. That is orchestration behavior. And orchestration deserves its own category.

My current take

If you force me to summarize the market in one sentence, it would be this: the best ADE is the one that matches your tolerance for abstraction.

I'm increasingly leaning towards the canvas approach for this.

X post

the canvas is where regular people will do advanced things with AI (and where advanced people do extraordinary things with AI)

tldraw on X

That feels right. It's the most flexible way to orchestrate multiple agents without getting boxed into a rigid UI.

If you want the most polished, guided experience, Conductor is still a strong recommendation.

If you want the best balance of flexibility, performance, and sensible UX, I would still lean Superset.

If you want OpenAI-native, Codex is great. If you want Anthropic-native with a friendlier app layer, Claude Code and its desktop experience are increasingly compelling. If you want raw terminal power with a bit more structure, tools like Solo and CMUX still have a place.

But the bigger takeaway is not which tool is at the top this week. It is that the landscape is maturing so quickly.

That alone tells you the category is real.